Harmful Content on Social Media that Can Damage Your Brand Reputation

As the influence of social media in our lives continues to grow, so does the need for brands to be vigilant about their online presence. It is crucial for businesses to be aware of the harmful content that circulates on social media platforms. Whether it's spam, hate speech, violence, or brand-damaging content, these incidents can spread like wildfire, tarnishing the reputation you've worked tirelessly to build.

Understanding these risks and putting effective strategies in place to mitigate them has therefore become a priority for any brand that wants to thrive in the ever-evolving digital landscape.

Almost every type of damaging content can also be found in the comments on ads from some of the most popular brands. Spreading like a parasite, these comments can completely crowd out real interactions with customers and leave them wondering why the company is not doing anything. Every advertising manager should ask themselves: is this what I want to promote?

So what kind of content is truly harmful? The short answer is, it depends on how you want to approach things.

For the longer answer, read on. This blog post describes what we have identified as the most common types of negative content, based on the millions of comments we have encountered over years of managing social engagement for large companies.

Examples of Harmful Content on Social Media

Some content is harmful in virtually any context: spam, scams, violent comments and imagery, and hate speech, however notoriously vague that term may be. Beyond offending users, this type of content stifles meaningful interaction between them and drowns out any productive content on the page.

Spam and scams

Sadly, both remain common problems across the web, and neither shows any sign of dying out anytime soon. While the once-in-a-lifetime opportunity to make money by helping a foreign prince in need is becoming rarer, you can still pay for a spell to win back your lover or chat up a love-deprived bot. We think advertisers should be able to decide whether they want to pay to promote this kind of content.

Comments and images depicting violence

Some social media accounts exist simply for the shock value of posting violent content, in text or images, on various pages. As this content is most assuredly not safe for work, we will refrain from going into too much detail. Let’s just say that whether it appears in an argument between users or is directed at the brand, we believe no one should be exposed to wishes and depictions of suicide, violence, mutilation, or sexual harassment online.

Hate speech

This was one of the buzzwords of 2017 and looks set to remain prevalent in 2018 as well. Courts and social media giants are still struggling to decide what should be done about it on a global scale, but the tools to monitor blatant racism, sexism, and other types of discriminatory content already exist. The relationship between free speech and hate speech is complex and still largely unresolved, yet most would agree with us that a brand's ad spend should not go into promoting this type of content.

Scrolling through wastelands of spam chains makes most users click away quickly, while discriminatory and hateful language alienates potential customers and existing community members.

The reality is that every page receives this type of content; what matters is how it is dealt with, and whether it is dealt with at all.

However, while removing the types of content above goes a long way toward nurturing meaningful experiences and engagement online, other types of content may not be offensive in themselves but can still harm your brand.

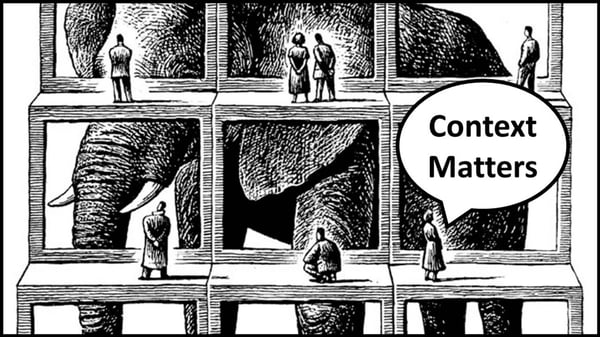

Contextually Harmful Content

We have written before about the reputation damage that harmful comments can cause to your ads, and the content described above covers only some of the issues brands encounter on social media. The rest of the damaging content consists of comments we call contextually harmful.

Imagine that you run a family-friendly brand or product: perhaps a video game (an industry struggling with notoriously toxic communities) or a children’s clothing brand. You might want to be stricter and enforce a clean-language policy suitable for little eyes. Suddenly, profanity becomes harmful to your page.

Perhaps you offer an online media service with region-locked content. Now the harmful content you should be screening for includes messages that give other users tips on account misuse and piracy.

Pharmaceutical brands should not enable users to discuss folk remedies or other medications with each other, as these conversations can cause serious physical harm to users and significant legal harm to the brand.

A retailer may receive an extremely negative customer review on its social media page or under its ad campaign. Legitimate concerns like these naturally need to be addressed, but the cold fact is that users rarely bother to edit their posts once their issue is resolved, so these stories will follow your ad campaign indefinitely. Meanwhile, positive stories can go unnoticed in the comments, drowned out by upset customers or spammers.

A beauty brand might wish to tackle the controversial topic of animal testing and counter any false information that could do irreparable damage to its image. At the very least, most would want to be informed when this topic comes up on their social properties.

The list goes on.

What these topics have in common is that most people would not consider them harmful content as such. Yet they are discussions that can, and do, harm brands and their campaigns. In a separate blog post, we dive deeper into 8 common mistakes brands make when running campaigns on social media.

And even if Facebook, Instagram, and the global community find a way to combat hate speech, the measures they take will not address these issues.

That is why we are here to help. We already monitor, take action on, and analyze the content described above. We want you to know what people are saying on your properties and to give you full control over which content can stay on your page and what happens to it.

Curious about how BrandBastion can help you get started with moderating harmful content? Read more about our Lite Platform and start a free trial:

Auto-moderate harmful content

Facebook, Instagram, YouTube, TikTok: Ads & Organic covered (including FB Dynamic Ads)