What are scam and spam bots and how to fight them

Social media platforms have become a breeding ground for hackers, scams, and spam bots, which pose a significant threat to brands and users alike. These malicious actors and bots can infiltrate conversations, spread false information, and compromise both brand and user security. In this blog post, we will delve into the world of scam and spam bots, explore their impact on social media, and provide practical insights on how to fight them effectively.

What are spam bots on social media?

Social media platforms attract millions of users daily, and unfortunately they also attract hackers, pirates, trolls, and scam and spam bots. These bots are automated accounts created to mimic human behavior and engage in deceptive activities. While they may look like genuine users, their primary purpose is to carry out malicious actions, such as spreading spammy links, disseminating fake news, or conducting phishing attacks.


Nowadays, no one is safe from falling victim to social media villains. In 2016, hackers broke into the Twitter account of Hootsuite CEO Ryan Holmes. Under the cover of night, they laid a trap for unsuspecting contacts, posting “Hey, it’s OurMine Team, we are just testing your security, please send us a message.” IT experts confirmed that the hackers had entered through a Foursquare hack and crossed into Twitter because Holmes had previously enabled the application.

The fact that the chief of a social media management company could fall victim to these ploys shows how adept hackers have become. He joins the ranks of Twitter co-founder Evan Williams and Facebook co-founder and CEO Mark Zuckerberg himself, who was hacked for the third time in 2016.


Why are scam and spam bots an issue?

Spam bots drew significant attention in 2022, when Elon Musk's announcement that he might withdraw from the Twitter acquisition highlighted concerns about the platform's transparency regarding the prevalence of bots. Not all bots are harmful, however: some serve a positive purpose by enhancing users' online experiences. For instance, on Twitter, there are bot accounts specifically designed to offer real-time weather updates or track stock prices.

Nevertheless, numerous bots operate with malicious intentions, and spam bots are often used to target and undermine brands, which poses a significant issue for both brands and users. Here's why:

- Diminished trust: Bots can create a sense of distrust among social media users and consumers by polluting the online space with spammy content, false news, negative comments, fake customer complaints, or misleading information about a brand. This can undermine the credibility of brands and discourage genuine users from engaging with the brand.

- Brand reputation at stake: Brands with a strong social media presence and high engagement rates are particularly vulnerable to the negative impact of spam bots. These bots can tarnish a brand's reputation by associating it with fraudulent or unethical practices, such as sharing enticing deals with fraudulent links, leading to a loss of customer trust and loyalty.

- User security risks: Spam bots often engage in phishing attacks, attempting to steal personal information or spread malware. Users who unknowingly interact with these bots may fall victim to scams or have their sensitive data compromised. When such incidents occur, it not only harms the individuals affected but also reflects negatively on the brand associated with the bots.


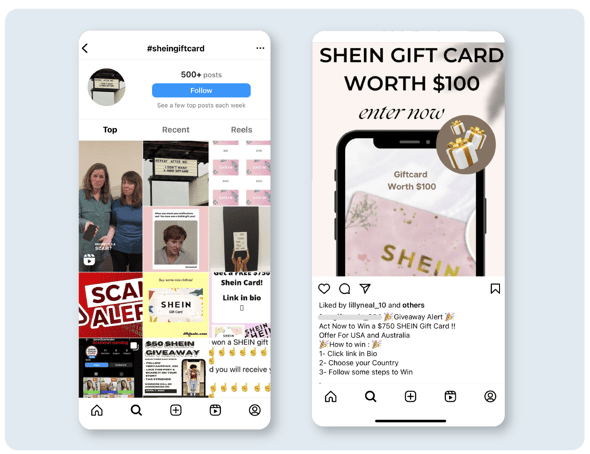

One of the latest scams to hit Instagram is the SHEIN Gift Card scam. This scam begins with a comment from an unknown account on an Instagram post congratulating the user for winning a SHEIN gift card or tagging them to participate in a giveaway. The scammers provide a link in the Instagram bio that leads to a fake SHEIN website, aiming to deceive unsuspecting users.


How to identify and remove social media scam and spam bots

There are some key indicators to watch out for when identifying scam and spam bots: irrelevant or repetitive messages, unusual engagement patterns, low-quality profiles, and unnatural language. You can delete or hide these comments, and you can block and report the bot accounts behind them, but doing this by hand is not a sustainable solution for your brand: new bot accounts emerge faster than you can remove the old ones.
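For teams that want to experiment with these indicators themselves, here is a minimal Python sketch of how a few of them could be turned into simple checks. It is only an illustration under stated assumptions, not how BrandBastion or any platform actually detects bots: the `Comment` fields, the bait phrases, and the thresholds are all hypothetical, and it does not attempt to cover unusual engagement patterns or unnatural language.

```python
import re
from collections import Counter
from dataclasses import dataclass

URL_PATTERN = re.compile(r"https?://\S+|www\.\S+", re.IGNORECASE)
# Hypothetical bait phrases; adjust to what you actually see on your pages.
BAIT_PHRASES = ("you won", "gift card", "claim your prize", "link in bio")


@dataclass
class Comment:
    # Hypothetical fields; real platform data will look different.
    author: str
    text: str
    author_followers: int = 0
    author_has_avatar: bool = True


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so copy-pasted comments match."""
    return " ".join(text.lower().split())


def spam_signals(comment: Comment, text_counts: Counter) -> list[str]:
    """Return the names of the heuristic signals this comment triggers."""
    signals = []
    norm = normalize(comment.text)
    if text_counts[norm] >= 3:  # same text posted three or more times
        signals.append("repetitive_message")
    if URL_PATTERN.search(comment.text):
        signals.append("contains_link")
    if any(phrase in norm for phrase in BAIT_PHRASES):
        signals.append("bait_phrase")
    if comment.author_followers < 5 and not comment.author_has_avatar:
        signals.append("low_quality_profile")
    return signals


def flag_suspicious(comments: list[Comment], min_signals: int = 2):
    """Return (comment, signals) pairs for comments with enough signals."""
    text_counts = Counter(normalize(c.text) for c in comments)
    flagged = []
    for comment in comments:
        signals = spam_signals(comment, text_counts)
        if len(signals) >= min_signals:
            flagged.append((comment, signals))
    return flagged
```

Calling `flag_suspicious(comments)` on a list of `Comment` objects returns the comments that trigger at least two signals, along with the names of those signals. Requiring more than one signal is a deliberate choice to reduce false positives, since a genuine customer may well share a link or have a brand-new profile. Hand-written rules like these are easy for bot operators to work around, so treat them as a starting point for manual review rather than a replacement for ongoing moderation.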

This is where social media comment management platforms like BrandBastion Lite come into play, helping you proactively protect your brand reputation and community. A key feature for this purpose is AI-powered auto-moderation: automated hiding of spam, offensive, inappropriate, and against-brand comments.

How to stop bots on social media: Automatically hide harmful comments under your posts/ads

To begin, it's important to be aware that scam and spam bots can infiltrate both your organic posts and sponsored posts. Monitoring the presence of these bots becomes particularly challenging in the case of sponsored posts, as they may be managed by external parties handling your ad campaigns or different in-house teams.

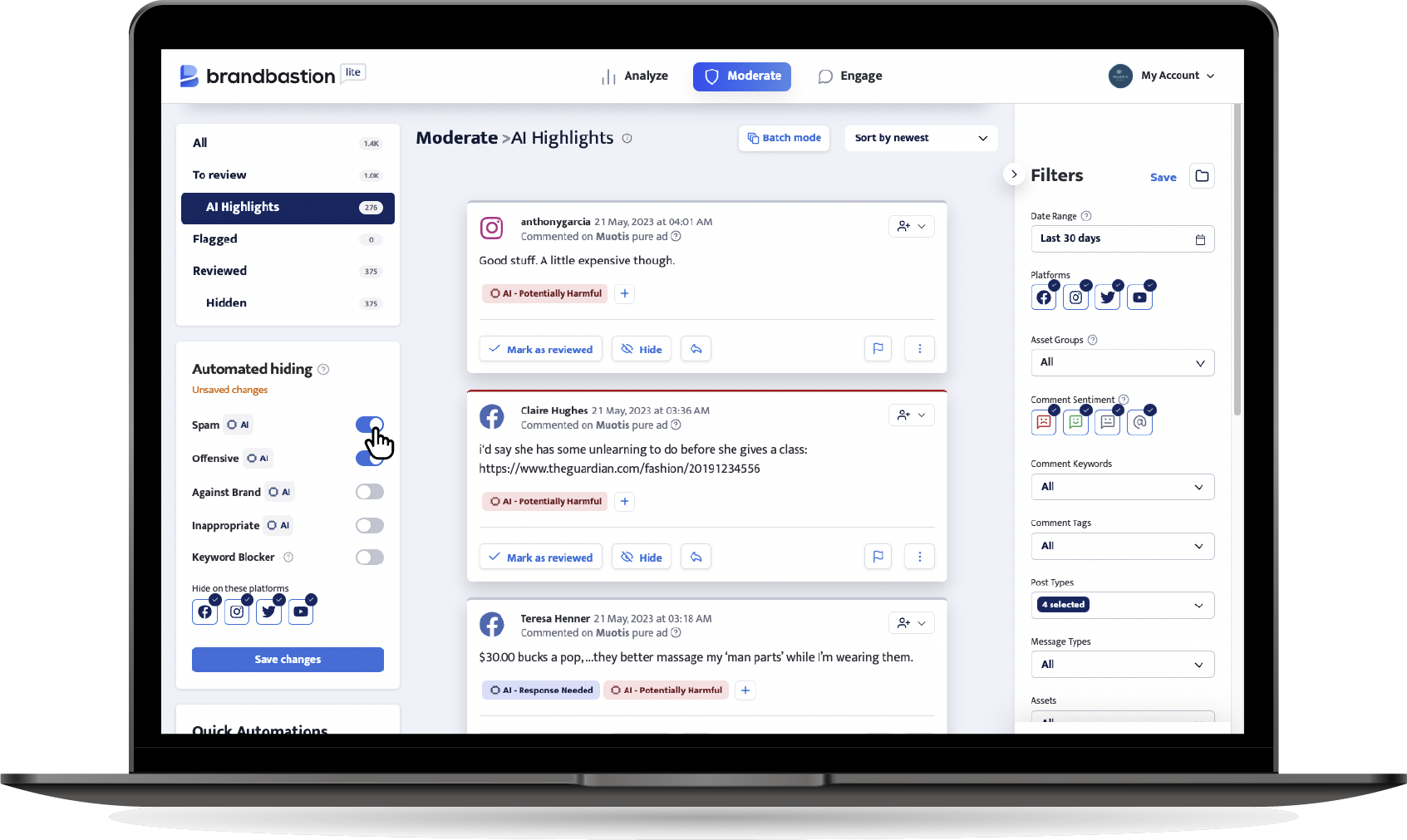

BrandBastion Lite helps you monitor and manage all your social media conversations in one place. Our AI identifies harmful comments toward your brand and your community, and you can turn on automated moderation in a few clicks:

BrandBastion Lite enables automated hiding for spam, offensive, inappropriate, and against-brand comments found by our AI.

Want to start moderating damaging and unwanted comments? Start a 30-day free trial with the BrandBastion platform!

Moderate with BrandBastion

BrandBastion lets you monitor and moderate paid and organic interactions in real time across platforms like TikTok, Facebook, Instagram, LinkedIn, X, and YouTube.